How much risk tolerance does your enterprise have for AI agents? It is the question every CISO ends up answering. The spectrum is wide. At one end, startups running swarms of agents that touch everything with minimal oversight, optimizing for speed. At the other, highly regulated industries letting agents in carefully, one workflow at a time, with human approval on the actions that matter. Most enterprises sit somewhere between, and the right answer is per-deployment, not a corporate-wide setting.

For every deployment, the exercise is the same. Name the worst-case outcome. Apply a technical or human control to reduce it. Repeat. The mental model security teams should reach for is one they already run: treat agents as insider risk. Virtual employees with the privileges to do real work, the speed to make mistakes faster than a human could and no career incentive to slow down. The productivity story everyone wants to tell about agents is the same story that breaks if the risk is not managed.

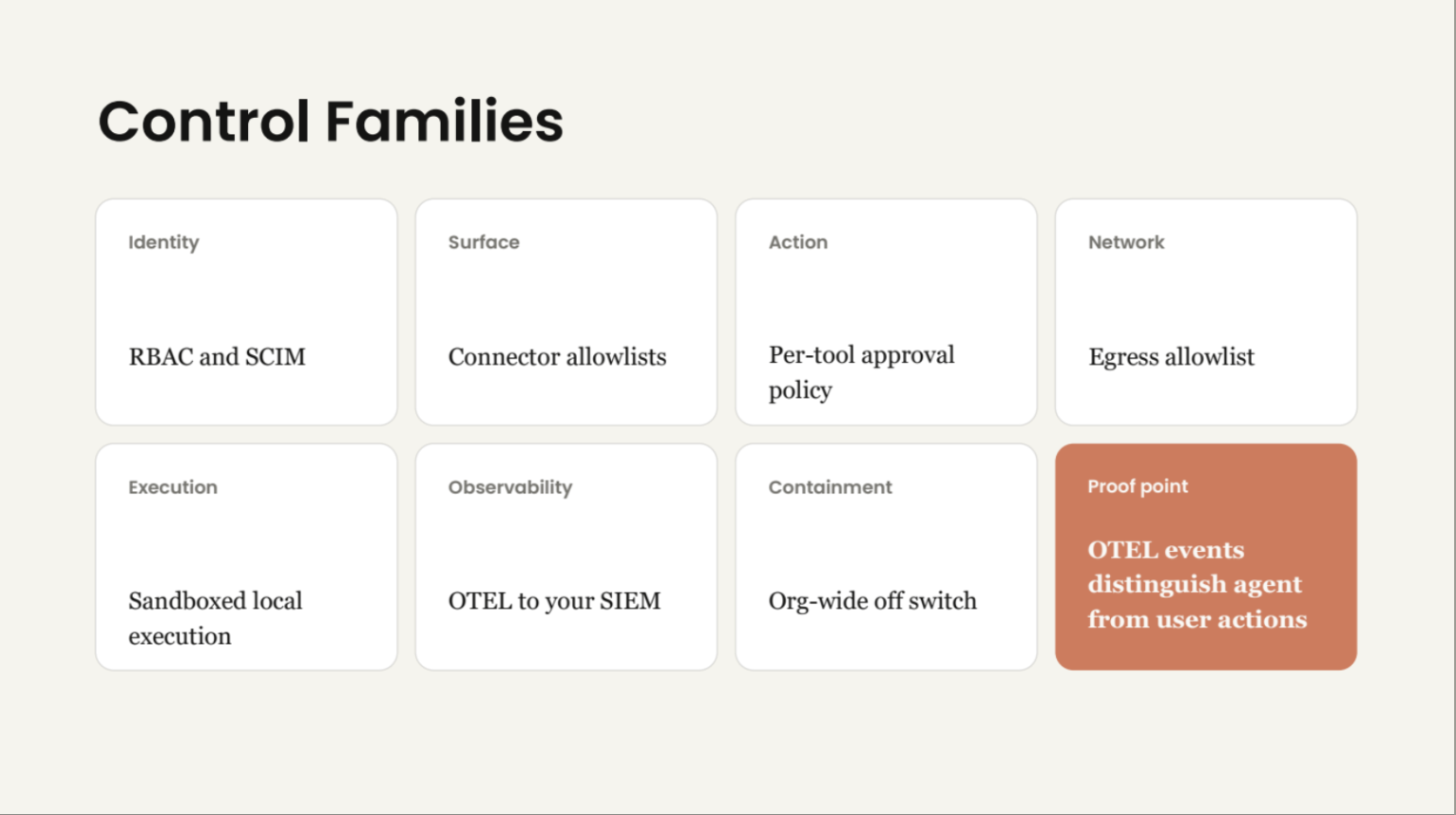

The framework that follows groups the controls into seven families: identity, surface, action, network, execution, observability and containment. The list is right. The implementation work is at the seams between them. We draw on Anthropic's CISO's Guide to Agentic AI, presented by Jason Clinton and Dor Fledel, and have expanded each family into a longer reference framework in the new CISO Reference for Agentic AI. This post is the short version.

Agents are insider risk, not a chat surface

Insider risk programs already exist. Security teams run them. They have processes for over-privileged employees, anomalous activity, sudden data access patterns and accounts that should have been deprovisioned months ago. An agent inside your perimeter fits the same mental model, with three differences. It does not get tired, it does not stop at one wrong action and it cannot be deprovisioned by HR.

Concrete controls flow from the worst case. Which model family. Which model size. Reasoning-class models for high-stakes decisions, smaller and cheaper models for bounded tasks. Which tools the agent can reach: read-only catalog lookups for one role, destructive operations like row deletes or wire transfers gated behind human approval for another. Which data the agent can touch: private, trustworthy enterprise data inside the perimeter for one workflow, open-world web retrieval for another. Agentic policy is not one slider. It is a per-deployment matrix of model, tool and data choices, each one tuned to the blast radius the role can tolerate.
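
One way to picture the per-deployment matrix is as a small policy record per role. The sketch below is illustrative only: `DeploymentPolicy`, `check_tool_call`, the role names and the tool names are all hypothetical, not part of any product or of the framework itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentPolicy:
    """One row of the per-deployment matrix: model, tools and data (hypothetical shape)."""
    role: str
    model_class: str                   # e.g. "reasoning" or "small"
    allowed_tools: frozenset[str]      # tools the agent can reach at all
    approval_required: frozenset[str]  # subset gated behind human approval
    data_scopes: frozenset[str]        # e.g. "enterprise-private", "open-web"


def check_tool_call(policy: DeploymentPolicy, tool: str) -> str:
    """Return "deny", "needs_approval" or "allow" for a requested tool."""
    if tool not in policy.allowed_tools:
        return "deny"
    if tool in policy.approval_required:
        return "needs_approval"
    return "allow"


# Illustrative rows: a read-only role on a small model, and a
# higher-stakes role whose destructive tool needs a human in the loop.
catalog_reader = DeploymentPolicy(
    role="catalog-reader",
    model_class="small",
    allowed_tools=frozenset({"catalog.lookup"}),
    approval_required=frozenset(),
    data_scopes=frozenset({"enterprise-private"}),
)
finance_ops = DeploymentPolicy(
    role="finance-ops",
    model_class="reasoning",
    allowed_tools=frozenset({"ledger.read", "wire.transfer"}),
    approval_required=frozenset({"wire.transfer"}),
    data_scopes=frozenset({"enterprise-private"}),
)

print(check_tool_call(catalog_reader, "wire.transfer"))  # deny
print(check_tool_call(finance_ops, "wire.transfer"))     # needs_approval
print(check_tool_call(finance_ops, "ledger.read"))       # allow
```

Keeping each role as its own immutable row makes the blast radius reviewable one deployment at a time, which is the point of treating policy as a matrix.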

ISO/IEC 42001 sits behind this work for the auditors. The board and the CEO sit in front of it asking why adoption is not faster.

The shift the primitives are responding to

An LLM router secures what the model says. It rate-limits, content-filters, logs the request and routes between providers. The router is also where model selection happens, and models are getting more intelligent fast. The right router picks the right family for the job (reasoning-class for high-stakes work, smaller for bounded tasks) and the right hosting model: a frontier API straight from Anthropic, OpenAI or Google, or a VPC-hosted deployment on Bedrock, Azure OpenAI or Vertex AI for prompts that cannot leave the customer perimeter. The atom of control is the request. The failure mode is bad text.

An MCP gateway secures what the model does. Tool calls. Outbound requests. State changes. The atom of control is the action. The failure mode is real-world consequence. Wrong row deleted, wrong wire transferred, wrong email sent. The control families are the minimum vocabulary you need to talk about controls in that world.

Seven control families

The framework groups the controls into seven families that span identity, the agent's tool surface, the network, the runtime and the audit trail:

- Identity. Two modes, both anchored in customer systems. Human-in-the-loop user identity from your enterprise IdP (SAML, OIDC, SCIM) for interactive sessions. Ambient identity from workload identity federation for headless agents. RBAC and groups govern access in both modes.

- Surface. Connector allowlists. Which tools and connectors are even available for the agent to choose from.

- Action. Per-tool approval policy. The fine-grained call that says yes or no, with friction proportional to risk.

- Network. Egress allowlist. Which domains every tool can reach as a side effect of running.

- Execution. Sandboxed local execution. Where the agent runs code and how blast radius is contained.

- Observability. OTEL to your SIEM. The audit trail, with events that distinguish agent actions from user actions.

- Containment. Org-wide off switch. Per-user revocation, VM shutdown and the kill switch the board asks about.

From Anthropic's CISO's Guide to Agentic AI, presented by Jason Clinton and Dor Fledel.

The "OTEL events distinguish agent from user actions" piece is the proof point that ties the whole list together. Without it, the SOC cannot tell whether your last incident was a phishing attack on a human, a compromised agent or a tool with a bad default. With it, every other family in the list becomes auditable.
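
As a rough illustration of what "distinguishing agent from user actions" can mean at the event level, here is a minimal sketch of a structured action event. The field names (`actor_type`, `on_behalf_of` and so on) are assumptions made for this example; they are not OTEL semantic conventions or a published schema.

```python
import json
from datetime import datetime, timezone

ACTOR_TYPES = ("agent", "user")  # hypothetical vocabulary for this sketch


def action_event(actor_type, actor_id, on_behalf_of, tool, decision):
    """Build one structured audit event that records who actually acted."""
    if actor_type not in ACTOR_TYPES:
        raise ValueError(f"unknown actor_type: {actor_type!r}")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor_type": actor_type,      # "agent" or "user"
        "actor_id": actor_id,
        "on_behalf_of": on_behalf_of,  # human principal; None for direct or headless actions
        "tool": tool,
        "decision": decision,
    }


events = [
    action_event("user", "alice@example.com", None, "crm.search", "allow"),
    action_event("agent", "support-agent-7", "alice@example.com",
                 "crm.delete_row", "deny"),
]

# The SOC can now separate what the agent did from what the human did.
agent_events = [e for e in events if e["actor_type"] == "agent"]
print(json.dumps(agent_events, indent=2))
```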

Network egress deserves its own asterisk. Egress allowlists at the perimeter are necessary but not sufficient. The traditional stack of SWG, SSE, CASB and DLP was built for users and apps. It can see who the user is and what device they are on, but it cannot tell which agent or sub-agent inside a multi-step plan is actually making the call. Agents already live inside the allowed perimeter, under a legitimate user's identity, and their intent is encoded in application-layer payloads no firewall was built to parse. We unpacked this gap at length in Trustworthy Agents Need Zero-Trust Infrastructure.

Observability has a second leg too. The SIEM is the cold path. Every action event lands in your audit pipeline for incident response and long-term analysis. The platform team and the SOC also need a hot path. Which agent is making which calls right now. Which LLM is approving what. Which MCP server is throwing errors. Ferentin's telemetry sink keeps recent data on hand for a bounded retention window and surfaces actionable insights across the trifecta of agents, LLMs and tools/MCP servers. No custom dashboards to build. No pipelines to maintain.

The LLM leg of the trifecta is itself a control point. Newer, larger reasoning models have more intelligence baked in, especially around resisting prompt injection. Visibility into which model handled which action lets the platform team route sensitive decisions to the model that can defend itself, not the cheapest one in the catalog.

Authority belongs to the customer

The most important claim in the framework is also the easiest to miss. Authority sits in customer-held systems. Not in the vendor's dashboard. Not in a SaaS console run by the agent provider. The systems that make and enforce the decisions are the ones the customer already runs.

- Your IdP governs identity and groups. SAML, OIDC and SCIM for human-in-the-loop user identity. Workload identity federation for ambient agent identity. The agent platform does not introduce a parallel user or workload directory.

- Your SIEM receives the telemetry over OTEL. The audit trail lands where your SOC already triages everything else.

- Your network policy governs egress. The agent runtime honors what the network already allows, not a vendor-managed allowlist that can drift.

- Your admins set per-tool approval policy, so friction matches risk on a deployment-by-deployment basis.

- Published standards, integrated with the stack you already run. No proprietary protocols at the points that matter.

This is the Ferentin thesis. If a vendor wants you to centralize policy, identity, telemetry or revocation in their cloud, that is the failure mode the customer-held framing is meant to prevent. Compromise the vendor and you compromise every customer at once. Anchor authority in the customer's own systems and the blast radius of any single vendor incident stays bounded to that vendor's own systems instead of reaching every customer.

Why the seams break first

Real deployments fail at the seams between the control families.

Identity ↔ observability. A denied tool call is the most security-relevant event in the system. If it emits a silent block instead of an audit event with the policy rationale, the SOC sees that something was denied but cannot reconstruct why. Policy tuning becomes guesswork. We have watched teams roll back capabilities they actually needed because their audit trail could not distinguish “policy correctly denied” from “policy bug.”
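
A minimal sketch of the alternative to a silent block: the policy check returns a decision object that carries the rule that fired and a human-readable rationale, and the audit record copies both. `Decision`, `evaluate`, `audit_record` and the rule identifiers are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    allowed: bool
    rule_id: str    # which rule produced the outcome
    rationale: str  # why, in words the SOC can read


def evaluate(role_tools: dict[str, set[str]], role: str, tool: str) -> Decision:
    """Decide a tool call and always explain the outcome."""
    granted = role_tools.get(role)
    if granted is None:
        return Decision(False, "unknown-role", f"role {role!r} has no policy")
    if tool not in granted:
        return Decision(False, "tool-not-granted",
                        f"tool {tool!r} is not granted to role {role!r}")
    return Decision(True, "tool-granted",
                    f"tool {tool!r} is granted to role {role!r}")


def audit_record(role: str, tool: str, decision: Decision) -> dict:
    """Every decision, including a deny, becomes an event with its rationale."""
    return {
        "role": role,
        "tool": tool,
        "decision": "allow" if decision.allowed else "deny",
        "rule_id": decision.rule_id,
        "rationale": decision.rationale,
    }


policy = {"catalog-reader": {"catalog.lookup"}}
denied = evaluate(policy, "catalog-reader", "rows.delete")
print(audit_record("catalog-reader", "rows.delete", denied))
```

With the rule identifier on every deny, "policy correctly denied" and "policy bug" become distinguishable by querying for which rule fired.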

Network ↔ containment. Revoking a domain in policy is meaningless if the data plane reads its allowlist from a config file at startup. The seam between network and containment demands a hot-reload primitive on the policy bundle. Control plane signs and distributes, data plane caches and enforces, change takes effect on the next request. Without that, “revoke” means “file a ticket and wait for the next deploy window.” That is not a security control.
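
The sign, distribute, cache and enforce loop can be sketched in a few lines. This is a simplified illustration, not a reference design: it uses a shared-secret HMAC as a stand-in for whatever signature scheme a real control plane would use (likely asymmetric), and `sign_bundle`, `PolicyCache` and the bundle fields are hypothetical.

```python
import hashlib
import hmac
import json
import threading


def sign_bundle(policy: dict, key: bytes) -> dict:
    """Control plane: serialize the policy and attach an HMAC-SHA256 signature."""
    payload = json.dumps(policy, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"payload": payload.decode(), "signature": signature}


class PolicyCache:
    """Data plane: holds the current policy and swaps it without a restart."""

    def __init__(self, key: bytes, initial: dict):
        self._key = key
        self._lock = threading.Lock()
        # Deny-all default until a valid bundle is loaded.
        self._policy = {"version": 0, "egress_allow": []}
        self.load(initial)

    def load(self, bundle: dict) -> bool:
        """Verify and install a bundle; reject bad signatures and stale versions."""
        payload = bundle["payload"].encode()
        expected = hmac.new(self._key, payload, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, bundle["signature"]):
            return False
        policy = json.loads(payload)
        with self._lock:
            if policy["version"] <= self._policy["version"]:
                return False  # stale or replayed bundle
            self._policy = policy
        return True

    def egress_allowed(self, domain: str) -> bool:
        """Checked on every request, so a new bundle applies to the next call."""
        with self._lock:
            return domain in self._policy["egress_allow"]


key = b"demo-shared-secret"  # illustrative only
cache = PolicyCache(key, sign_bundle(
    {"version": 1, "egress_allow": ["api.example.com"]}, key))
print(cache.egress_allowed("api.example.com"))  # True

# Revocation: the control plane ships version 2 without the domain.
cache.load(sign_bundle({"version": 2, "egress_allow": []}, key))
print(cache.egress_allowed("api.example.com"))  # False
```

Because the allowlist is read from the cache on each request instead of from a file at startup, revocation takes effect on the next call.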

Identity ↔ network. Tools must inherit the calling agent's identity, not run as a shared service account. Otherwise the egress allowlist applies to the wrong principal, the audit log loses the chain of custody and any compromised tool becomes a privilege-escalation vector against every other agent that calls it. The mistake here is subtle and common. The LLM proxy has identity, the tool runner does not.
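
To make the identity seam concrete, the sketch below passes the calling agent's identity into the tool runner and keys the egress check on it, so the allowlist and the audit log both refer to the right principal. `CallContext`, `run_tool`, `EGRESS_BY_AGENT` and the domains are hypothetical, and no real network call is made.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CallContext:
    agent_id: str                       # the agent making the call
    on_behalf_of: Optional[str] = None  # human principal, if any


# Hypothetical per-agent egress allowlists.
EGRESS_BY_AGENT = {
    "support-agent": {"crm.example.com"},
    "research-agent": {"crm.example.com", "search.example.com"},
}


def run_tool(ctx: CallContext, tool: str, domain: str, audit_log: list) -> str:
    """Run a tool under the caller's identity, not a shared service account."""
    allowed = domain in EGRESS_BY_AGENT.get(ctx.agent_id, set())
    audit_log.append({
        "agent_id": ctx.agent_id,
        "on_behalf_of": ctx.on_behalf_of,
        "tool": tool,
        "domain": domain,
        "decision": "allow" if allowed else "deny",
    })
    if not allowed:
        raise PermissionError(f"{ctx.agent_id} may not reach {domain}")
    return f"{tool} reached {domain} as {ctx.agent_id}"


log = []
print(run_tool(CallContext("research-agent", "alice@example.com"),
               "web.fetch", "search.example.com", log))
try:
    run_tool(CallContext("support-agent", "bob@example.com"),
             "web.fetch", "search.example.com", log)
except PermissionError as exc:
    print("denied:", exc)
```

The same tool produces different outcomes for different callers, and each audit entry names the agent and the human behind it.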

Observability ↔ action. You cannot tighten scope without observed usage. Which tools is this role actually using? Which permissions are dormant? Where are approvals clustering? Rollout decisions made without telemetry are guesses dressed up as decisions. Observability has to feed the action plane, not just the SOC's incident queue.
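
The three questions above reduce to simple aggregations once action events exist. A minimal sketch, assuming each event is a dict with hypothetical `tool` and `decision` fields:

```python
from collections import Counter


def usage_report(granted: set[str], events: list[dict]) -> dict:
    """Summarize observed usage for one role against what it was granted."""
    used = Counter(e["tool"] for e in events if e["tool"] in granted)
    approvals = Counter(
        e["tool"] for e in events if e.get("decision") == "needs_approval"
    )
    return {
        "call_counts": dict(used),                 # tools actually in use
        "dormant": sorted(granted - set(used)),    # granted but never called
        "approval_hotspots": approvals.most_common(),  # where approvals cluster
    }


granted_tools = {"catalog.lookup", "ledger.read", "wire.transfer", "rows.delete"}
observed = [
    {"tool": "catalog.lookup", "decision": "allow"},
    {"tool": "catalog.lookup", "decision": "allow"},
    {"tool": "wire.transfer", "decision": "needs_approval"},
    {"tool": "wire.transfer", "decision": "needs_approval"},
    {"tool": "ledger.read", "decision": "allow"},
]

report = usage_report(granted_tools, observed)
print(report["dormant"])            # ['rows.delete']
print(report["approval_hotspots"])  # [('wire.transfer', 2)]
```

Dormant grants are candidates for removal, and approval hotspots show where friction may need retuning.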

A vendor that solves one family cleanly is selling you a feature. A vendor that solves the seams is selling you a platform. Most products in the agentic AI security category today are in the first group.

What this looks like in practice

Four planes, each owning one or more control families:

- Identity plane. OIDC issuer, RBAC, AuthZEN PDP and vault. Implements identity and action.

- Control plane. Policy distribution, telemetry ingest, fleet management and containment switches. Implements admin-paced rollout and containment.

- Service Edge (data plane). LLM proxy, MCP gateway, surface allowlists, egress allowlist and sandboxed execution. Implements surface, network and execution. Runs at the customer edge so prompts and tool calls never leave the customer perimeter.

- Observability plane. Structured action events, per-tenant SIEM and OTEL routing, S3 sink. Implements observability.

The Service Edge in the customer perimeter is the one most teams underweight. The whole point of an agentic AI platform is that you never have to send a prompt or a tool call payload to the operator's cloud. Telemetry crosses, content does not. That is what makes the audit story tractable for healthcare, financial services, public sector and EU residency-bound workloads. We wrote that one up separately as well: Edge LLM Routing vs Cloud Gateway.

The procurement criterion that is forming

The buying criteria are forming in real time. The agentic vulnerability surface is widening faster than security teams can hire. Some are already calling it a vulnerability tsunami, with minutes to respond, not days or weeks. The AI SOC has to detect anomalous agent behavior, revoke individual users, shut down VMs and pull the org-wide off switch when something goes off the rails. Within twelve months, we expect “does this vendor implement the seven control families end-to-end, with the seams covered and authority in customer-held systems” to be a routine procurement question. Not a research question. A standard line on the security review template.

Three things you can do this quarter to stay ahead of that:

- Write the action audit schema you'll require from any vendor. Eight fields is fine. Insist that “deny” events carry the policy rationale and that the schema distinguishes agent actions from user actions. If your existing AI vendor cannot produce a sample, that is the answer.

- Demand a mid-flight revocation demo. Ask the vendor to revoke a tool or domain on a running agent and show the next call hitting the new policy. On a live call, this exposes the seam between network and containment in two minutes. If the response involves a redeploy, you have your answer.

- Stop treating the data plane as the vendor's perimeter. For any workload touching regulated data, the prompt and tool-call payloads must stay in your VPC. The audit metadata can cross. The content cannot. Authority belongs to you.

The board and the CEO are pushing for adoption. The security team has to clear the path without leaving an agent loose inside the perimeter. The control families are the framework. The seams are the work.

The full reference, with a maturity ladder, vendor-evaluation checklist and the four-plane reference architecture, is in the CISO Reference for Agentic AI guide. If you'd like to see the same primitives running in your environment, book a demo.
